The How and What and Why of 3D Conversion

I recently worked for a very large corporation on a very large-scale project involving magicians, sorcerers and a fantasy world. The film was supposed to go to the big screen (the really, really big screen) in 3D; now it will only be released in 2D. Sorry for the vague description, but I am still under a somewhat restrictive non-disclosure agreement.

Rest assured that this particular movie franchise is so big that any misstep would likely cost the production company a couple of million bucks. The decision not to release the movie in 3D was made not long before its release, after a lot of very good work had already gone into the conversion. Yes, conversion. I am not here to spill the beans on how this all happened on the management side, and I also can't go into detail about how their particular approach to conversion worked. I just want to answer a couple of more general questions about 2D-to-3D conversion. I feel that this is public knowledge and can be talked about (looks over his shoulder to see if an army of lawyers is on his back).

The first question I get asked over and over again is why a movie that big was not shot in 3D in the first place, since most conversions so far have looked horrible, hurt the eyes and generally spoiled the viewing experience (Alice in Wonderland, for example).

I have asked the team this question myself over and over again. There is one simple answer I got back: it's not easier to produce a visual-effects-heavy film entirely in 3D than it is to convert it to 3D. Some even said it's more expensive and more difficult to run a complete 3D pipeline from shoot to delivery than to do a conversion.

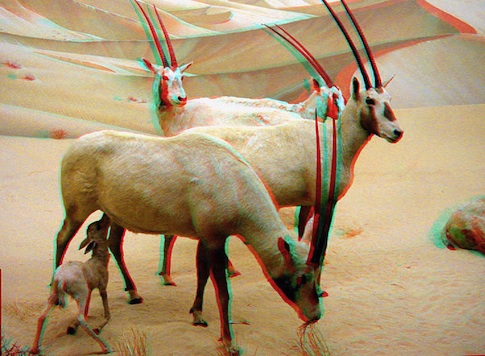

Why is that? For one, shooting 3D is not perfect. There are pretty much three different ways to shoot 3D, and all of them have a number of problems. First there is the parallel rig: two cameras (or two lenses, as on the new Panasonic camera) side by side, perfectly parallel. For wide shots with no near foreground this works OK, but forget any and all close-up action; you will walk out of the movie theater cross-eyed. You can mimic what happens here by holding a finger very close to your face, centered between your eyes.

This can be improved by angling the cameras slightly toward each other (making them cross-eyed) so they converge on the point of interest. But since all of this is just tricking our eyes into believing we see a 3D picture, when in fact we only see a 2D picture on the screen, it opens a can of worms. What happens with the distant background? How can one change the angles of both camera bodies quickly and precisely enough to follow a character coming from far back to the front? Another thing to consider for both techniques is that the further apart you spread the cameras, the deeper the image becomes. It's really interesting how just half a millimeter can make the picture totally unbelievable and break the 3D illusion, or just make things look comical.
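To make the spacing point a bit more concrete, here is a minimal Python sketch of the textbook pinhole-stereo relation, not anything taken from the production. It assumes two parallel cameras whose images are shifted so that zero parallax falls at a convergence distance C (a common stand-in for toeing the cameras in; real toe-in also adds keystone distortion that this model ignores). A point at depth Z then lands with horizontal parallax `p = f * b * (1/C - 1/Z)` on the sensor, where f is the focal length and b the distance between the lenses. Parallax grows linearly with b, which is why tiny changes in spacing matter so much. The function names, the 24.9 mm sensor width and all the numbers are illustrative assumptions.

```python
import math


def sensor_parallax_mm(focal_mm, baseline_mm, convergence_mm, depth_mm):
    """Horizontal parallax (mm on the sensor) of a point at depth_mm.

    Simple shifted-sensor pinhole model (an assumption, not a rig spec):
    zero at the convergence distance, positive behind the screen plane,
    negative in front of it. Pass convergence_mm=math.inf for a purely
    parallel rig, where only objects at infinity have zero parallax.
    """
    return focal_mm * baseline_mm * (1.0 / convergence_mm - 1.0 / depth_mm)


def parallax_px(parallax_mm, sensor_width_mm, image_width_px):
    """Convert sensor parallax to pixels in the delivered image."""
    return parallax_mm / sensor_width_mm * image_width_px


if __name__ == "__main__":
    f, b = 35.0, 65.0              # hypothetical 35 mm lens, 65 mm lens spacing
    sensor_w, width = 24.9, 4096   # hypothetical sensor width, 4K frame width
    # Parallel rig, finger about 30 cm from the lenses: a huge in-front-of-screen offset.
    near = sensor_parallax_mm(f, b, math.inf, 300.0)
    print(round(parallax_px(near, sensor_w, width)), "px")
    # Converged at 3 m: the background at infinity now sits behind the screen.
    far = sensor_parallax_mm(f, b, 3000.0, math.inf)
    print(round(parallax_px(far, sensor_w, width)), "px")
```

Doubling `baseline_mm` doubles every parallax value, and moving `convergence_mm` only slides the zero point, which is roughly the trade-off the two rig types juggle.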

The third option is the mirror rig. You have two cameras at a 90-degree angle to each other (A 3D Rig overview), and the image is split between the left and right eye by a polarizing mirror. This approach is more precise, because the angle between the two cameras can be set more finely, and it is mostly used for close-ups, but it has one big drawback: if you polarize, you lose reflections. One eye will be missing reflections, which then have to be painstakingly added back by hand in post (or the viewer gets a headache because the two eyes aren't seeing matching pictures).

Precision is the key to all of these approaches. It's crazy how our brain actually works in 3D: it's not easily fooled, and if it is fooled but the result isn't perfectly correct, you will have an audience that walks out, takes ten aspirins and likely never comes back to a 3D movie in their lifetime.

Now couple this with the fact that no two lenses on this planet are exactly the same, that every camera has a billion settings that make the pictures differ, plus skew of the camera sensors, filters, dust, wind gusts, water (like rain) and so on, and you start getting an idea of what can actually go wrong during a shoot. On top of all that, there is not all that much you can do with the picture once it's shot. There is an $8,000 plugin that lets you adjust some things (and helps you a tiny bit with the VFX described in the next paragraph), but it's not magic and it surely is not a cure-all.

On top of these problems comes the next one. Doing visual effects for 2D is hard enough, especially if you are working on films that are finished in 4K (4096×3072 pixels or similar, depending on the widescreen format used). You trick your way around to make cables invisible, paint back hairs where the green screen didn't work, manually draw masks for all 24 frames of every second of film to make a robot stand behind a person instead of in front, and adjust colors to perfection so things look right and fit together. Now in 3D you have two pictures that are pretty much the same but aren't. If you think it's just a matter of copying one side over to the other, forget about it. When I talked to a fellow compositor who has worked on a 3D movie, the full scope came to light. He (and I might add he is a pure genius young compositor, way better than me) said he needs TEN times as much time to finish a shot as he would in 2D. Ugh.

Summing that up: the reason a lot of films are still converted from 2D to 3D rather than shot in 3D comes down to the lack of really good shooting options on set, highly difficult compositing, and little leverage to adjust depth after the footage is shot. That makes it more expensive (and not necessarily better) to shoot in 3D than to convert.

So how does a conversion work? Well, I think I can say this much: it's A LOT of manual labour. You roughly rebuild the scene you want to convert in 3D, cut out the objects in your scene based on their depth, project those cutouts onto their respective 3D objects, and then render the whole thing from a camera that is offset to the side. That leaves you with occlusion edges that then have to be filled in and corrected, mostly by hand. That is mostly a job of making up what would be behind objects. Crazy stuff, really: make a one-pixel mistake in this and it all stops working, giving you headaches or looking just outright laughable (mostly it's headaches, though). Bad conversions have characters' hair sticking not to their heads but to the back wall, see-through objects looking like cardboard, and weird depth changes when one object passes another in depth (like two people dancing and swiveling around).
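The "render from an offset camera, then fill the holes" step can be illustrated on a single row of pixels. The Python sketch below is a deliberately simplified stand-in: it uses a per-pixel depth shift (often called depth-image-based rendering) instead of projecting cutouts onto rebuilt geometry as described above. Each pixel moves sideways by an amount derived from its depth, a depth buffer keeps the nearest pixel when two land on the same spot, and the gaps that open up behind foreground objects are filled by stretching the neighboring background. The function names, the `HOLE` marker and the `k_px`/`convergence` parameters are hypothetical.

```python
import math

HOLE = None  # marks a pixel that no source pixel landed on (disocclusion)


def shift_scanline(colors, depths, k_px, convergence):
    """Forward-warp one row of pixels into a second, horizontally offset eye.

    colors: pixel values for one row; depths: matching per-pixel depth (> 0).
    k_px: focal length times lens spacing, expressed in pixels (assumed).
    convergence: depth that should end up with zero shift (the screen plane).
    Returns (row, zbuf): the warped row with HOLE gaps and its depth buffer.
    """
    width = len(colors)
    row = [HOLE] * width
    zbuf = [math.inf] * width
    for x, (color, z) in enumerate(zip(colors, depths)):
        # Nearer than convergence shifts one way, farther shifts the other way.
        shift = k_px * (1.0 / convergence - 1.0 / z)
        nx = x + round(shift)
        # Keep the nearest surface when several pixels land on the same spot.
        if 0 <= nx < width and z < zbuf[nx]:
            zbuf[nx] = z
            row[nx] = color
    return row, zbuf


def fill_holes(row, zbuf):
    """Naively fill each run of HOLE pixels from its farther (background) neighbor."""
    out = list(row)
    n = len(out)
    x = 0
    while x < n:
        if out[x] is not HOLE:
            x += 1
            continue
        start = x
        while x < n and out[x] is HOLE:
            x += 1
        # start - 1 and x are the valid pixels on either side of the gap (if in range).
        sides = [i for i in (start - 1, x) if 0 <= i < n]
        if not sides:
            break  # the whole row is empty; nothing to copy from
        src = max(sides, key=lambda i: zbuf[i])  # larger depth = background
        for i in range(start, x):
            out[i] = out[src]
    return out


if __name__ == "__main__":
    # Background "abcdef" at depth 100 with a two-pixel foreground object "FG" at depth 50.
    row, zbuf = shift_scanline(list("abcFGdef"), [100, 100, 100, 50, 50, 100, 100, 100], 200, 100)
    print(row)                    # ['a', 'F', 'G', None, None, 'd', 'e', 'f']
    print(fill_holes(row, zbuf))  # ['a', 'F', 'G', 'd', 'd', 'd', 'e', 'f']
```

The stretched "d" pixels are exactly the kind of smear that a conversion artist has to replace with believable, hand-made background.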

Pros of a conversion: it's cheaper for FX-heavy material, and the director and his team (of stereographers) can adjust depth on the fly in the editing room. That is a HUGE plus for making the movie coherent (so your eyes don't have to constantly adjust to a different depth in every scene), and it lets them tweak individual scenes to make them look perfect or to isolate one character or object in 3D space. (This is where 3D shines from a filmmaker's perspective.)
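One way to picture adjusting depth for coherence is as a remap of each shot's parallax. The Python sketch below assumes each shot is summarized by a list of per-pixel (or per-object) parallax values in pixels. It linearly squeezes or stretches them into a shared target range, and separately shifts everything so a chosen subject sits exactly on the screen plane (a horizontal image translation). The function names and target numbers are hypothetical, and real depth-grading tools are far more nuanced than a linear remap.

```python
def remap_parallax(values, target_near, target_far):
    """Linearly rescale a shot's parallax values (pixels) into [target_near, target_far]."""
    lo, hi = min(values), max(values)
    if hi == lo:
        # Flat shot: park everything in the middle of the target range.
        mid = (target_near + target_far) / 2
        return [mid for _ in values]
    scale = (target_far - target_near) / (hi - lo)
    return [target_near + (v - lo) * scale for v in values]


def set_screen_plane(values, subject_parallax):
    """Shift all parallax so the subject lands at zero, i.e. on the screen plane."""
    return [v - subject_parallax for v in values]


if __name__ == "__main__":
    shot_a = [-40.0, 0.0, 60.0]   # hypothetical: very deep shot
    shot_b = [-5.0, 2.0, 10.0]    # hypothetical: shallow shot
    budget = (-10.0, 20.0)        # hypothetical shared depth budget in pixels
    print(remap_parallax(shot_a, *budget))
    print(remap_parallax(shot_b, *budget))
    print(set_screen_plane(shot_b, 2.0))
```

Bringing consecutive shots into the same range is what keeps the eyes from re-focusing at every cut; shifting the screen plane is the simplest way to "isolate" one character.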

Do I like 3D? I watched Avatar and didn't think 3D had much merit. I really thought it was a neat gimmick but nothing more. Having worked on it, stared at a single scene for quite some time, made small adjustments here and there and looked at other scenes, I do think that, as a filmmaker's tool, it has its place across all genres of film. I actually think that "talking heads" movies can benefit from it almost more than action movies with fast edits. While the latter will have the odd "shock" moment, all those fast edits that change depth will break your head, with your eyes trying to adjust every half second (but having nothing to adjust to, because they are looking at a 2D image after all). With talking-heads movies you can isolate characters (in a very minimal way, but it's actually beautiful), big far-off landscapes will evoke more emotion than ever before, and you will be more immersed in the feeling of the film in general. (I still like the gimmick at times, but that's really not where 3D shines.)

Oh, and don't judge 3D conversion by movies like Alice in Wonderland or Saw 3D, because those were bad conversions. It can look much, much better; I have seen it, and I think next year some very good conversions will be coming out. Even the biggest FX houses are still struggling to put together a 3D pipeline that works. It's all tough: it eats money for breakfast like no other new technology, manpower for lunch and directors for dinner.

If you have any other questions, I can try to answer them or ask people more in the know than me. Just leave a comment.